What Is Helm

Writing and maintaining Kubernetes YAML manifests for all the required Kubernetes objects can be a time consuming and tedious task. For the simplest of deployments, you would need at least 3 YAML manifests with duplicated and hardcoded values. Helm simplifies this process and creates a single package that can be advertised to your cluster. Helm is a client/server application and, until semi-recently, has relied on Tiller (the helm server) to be deployed in your cluster. This gets installed when installing/initializing helm on your client machine. Tiller simply receives requests from the client and installs the package into your cluster. Helm can be easily compared to RPM of DEB packages in Linux, providing a convenient way for developers to package and ship an application to their end users to install.

Think of Helm as combining two particular components for your whole application stack:Helm allows you to use templating language, and inject variables into your templates. This solves the problem of repeated logic, and the difficulty of finding the exact spot in the exact file for someone that isn’t familiar with the app, to change a config for your Kubernetes cluster. It also allows dynamic injection of variables - from the Values.yaml file - into your templates or Chart, instead of hardcoding the values.

Helm3 vs Helm2 and Tiller

Helm versions less than 3.0 use Tiller (More reading: https://helm.sh/docs/faq/#removal-of-tiller). Helm will look at your Templates/ Chart - look at the variables, go to the Values.yaml file, search for the specified values, find them and inject them into your templating file. Once it's got everything compromised, Helm will send this over to a component that needs to be installed on your Kubernetes cluster called Tiller.

Think of Tiller as just the server-side component of Helm. Tiller is going to take the commands you've sent with the Helm client, and turn those into something that your Kubernetes cluster is going to understand.

Removal of Tiller for Helm 3 and above

With role-based access controls (RBAC) enabled by default in Kubernetes 1.6, locking down Tiller for use in a production scenario became more difficult to manage. Due to the vast number of possible security policies, our stance was to provide a permissive default configuration. This allowed first-time users to start experimenting with Helm and Kubernetes without having to dive headfirst into the security controls. Unfortunately, this permissive configuration could grant a user a broad range of permissions they weren’t intended to have.

DevOps and SREs had to learn additional operational steps when installing Tiller into a multi-tenant cluster. After hearing how community members were using Helm in certain scenarios, we found that Tiller’s release management system did not need to rely upon an in-cluster operator to maintain state or act as a central hub for Helm release information. Instead, we could simply fetch information from the Kubernetes API server, render the Charts client-side, and store a record of the installation in Kubernetes. “Tiller’s primary goal could be accomplished without Tiller, so one of the first decisions we made regarding Helm 3 was to completely remove Tiller.”

Upgrading / Rolling Back

Helm is also very useful when scaling up or down, or upgrade or rolling back to a new configuration. Rather than taking down the application “wholemeal” and then re-deploying it with a new configuration, you can simply run:

Helm would then go through the process again of templating everything out, making sure it works the way it is supposed to, or you want it to, and get that config sent to Kubernetes so it can manage uptime as being the most important characteristic of your app. What if it doesn’t work? If you made a mistake when you upgraded or something, you can easily roll that upgrade back to the previous working version with the helm rollback RELEASE_NAME command.

Common Commands

List clusters and see if multiple clusters within helm:Important to note the commands above are using the flag --dry-run. This feature allows you to execute the command in a validation mode, enabling you to verify the installation before performing the actual install.

Helm Basic Commands

Helm Get

Get values from values file of release:Helm Repo Add

Gets Charts locally to use Helm.

Add a chart repository: - helm repo add [NAME] [URL]Under the hood, the helm repo add and helm repo update commands are fetching the index.yaml file and storing them in the $XDG_CACHE_HOME/helm/repository/cache/ directory. This is where the helm search function finds information about charts. Note: A repository will not be added if it does not contain a valid index.yaml.

Helm Show

Used to show CHART not RELEASE (for RELEASE use helm get):Install/ Upgrade

Deploys chart (created release):Manifest

Download the manifest for a named release (release is a helm chart that you have installed):Repo

List helm chart repos added from running helm repo add:Values

Gives you the current values for the specified release:Extra: Helm 2

To begin working with Helm, run the helm init command. This will install Tiller to your running Kubernetes cluster. It will also set up any necessary local configuration.

Helm2 looks in kube-system by default for Tiller, if in another Namespace - must run:

Helm2 Testing/ Validation

Helm Terms

Chart:

- A (local) package is called a Chart in Helm.

- A package of pre-configured Kubernetes resources.

- A Chart contains templates, which contain the kubernetes resources.

- All of the templates together make up the Helm Chart.

Release:

- A specific instance of a chart which has been deployed to the cluster using Helm.

- A release is an “active” chart, that is deployed to a Kubernetes cluster.

Installation:

- An installation of a chart is a specific instance of the chart.

- Helm needs a way to differentiate between different versions.

- One Chart may have many installations.

Repository:

- A group of published charts which can be made available to others.

- A repository is a place - usually a HTTP server - where charts are collected and shared. A local directory can be used as Helm repository too, charts can refer to their dependencies using a file://... syntax. The charts maintained and exposed by repository in an archived format.

- A repository must expose in its root an index.yaml file that contains a list of all packages supplied by repository, together with metadata that allows retrieving and verifying those packages.

- When Helm is installed, it is pre-configured with a default repository. New repositories an be added. The list of locally-configured repository can be inspected with helm repo list. Repositories can be searched for charts with helm search. Because chart repositories change frequently, it is recommended to update the local cache.

- Using Helm repositories is recommended practice, but they are optional. A Helm chart can be deployed directly from the local filesystem.

- Charts are stored and exposed in repositories as chart archives whose name include the chart name and version.

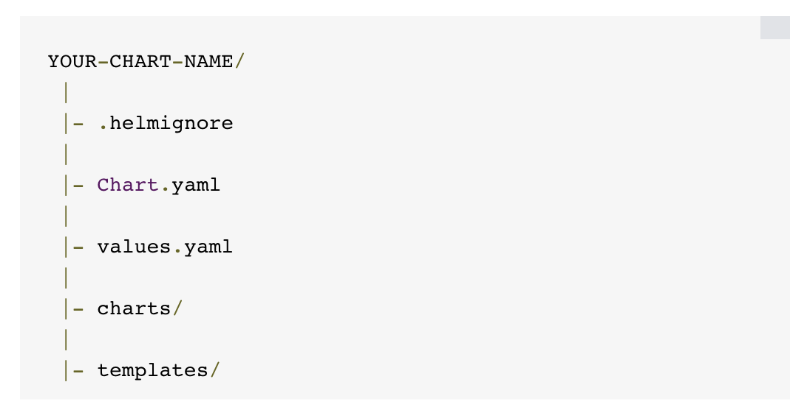

File Structure

- .helmignore: This holds all the files to ignore when packaging the chart. Similar to .gitignore, if you are familiar with git.

- Chart.yaml: This is where you put all the information about the chart you are packaging. So, for example, your version number, etc. This is where you will put all those details.

- Values.yaml: This is where you define all the values you want to inject into your templates. If you are familiar with terraform, think of this as helms variable.tf file.

- Charts: This is where you store other charts that your chart depends on. You might be calling another chart that your chart need to function properly.

- Templates: This folder is where you put the actual manifest you are deploying with the chart. For example you might be deploying an nginx deployment that needs a service, configmap and secrets. You will have your deployment.yaml, service.yaml, config.yaml and secrets.yaml all in the template dir. They will all get their values from values.yaml from above.

Written: August 2025